A landmark MIT study of 17,000 worker evaluations across 3,000 real-world tasks reveals that AI is not replacing jobs in sudden waves of disruption. It is rising steadily across all occupations at once. If current trends persist, by 2029 AI models will likely complete most text-based work tasks with 80% to 95% success at minimally sufficient quality. The implications for workers, companies, and policymakers are fundamentally different from what most forecasts have assumed.

In April 2026, researchers at MIT FutureTech published a study that reframes how we should think about AI automation. The paper, “Crashing Waves vs. Rising Tides,” by Matthias Mertens, Neil Thompson, and seven co-authors, presents what may be the most comprehensive empirical evaluation of AI’s ability to perform real-world labor tasks to date (Mertens et al., 2026). Using more than 17,000 blind evaluations by workers with actual on-the-job experience, the study tested 41 different AI models against over 3,000 tasks drawn from the U.S. Department of Labor’s O*NET occupational database. The central finding challenges a dominant narrative in AI policy discussions: automation is not arriving as sudden, industry-destroying “crashing waves.” It is arriving as “rising tides,” a broad, steady, and simultaneous improvement across nearly every occupation.

The Study: What MIT Actually Measured

The methodology is what makes this study significant. Rather than relying on expert surveys, employer projections, or theoretical modeling, the MIT team recruited thousands of workers through the Prolific research platform, each with at least six months of on-the-job experience in the occupation being evaluated. Workers were shown AI-generated responses to realistic task instances drawn from their own field, without being told the responses were generated by AI. They rated each response on a 1-to-9 scale, where 7 or higher indicated the output was “useful as is” at minimally sufficient quality, requiring no edits (Mertens et al., 2026).

The scale of the evaluation is notable: 11,536 tasks, each evaluated against 5 different AI models, producing 69,216 task instances. After rigorous quality filtering that excluded approximately 35% of responses for attention failures, inconsistencies, or implausible completion times, the final analysis included 17,205 high-quality evaluations across 22 major occupation families. The 41 models tested ranged from older systems like GPT-3.5 Turbo and Llama 2 to frontier models including GPT-5, Claude Opus 4.1, Gemini 2.5 Pro, and DeepSeek R1 (Mertens et al., 2026).

| Study Parameter | Value |
|---|---|
| Total Tasks Evaluated | 3,000+ (from 11,768 O*NET tasks screened) |
| Worker Evaluations | 17,205 (after quality filtering) |
| AI Models Tested | 41 (June 2023 to August 2025) |
| Occupation Families | 22 major categories |
| Task Duration Range | 10 minutes to several weeks (median: 2.5 hours) |
| Overall Success Rate | 60% (minimally sufficient, score of 7 or higher) |
| Mean Quality Score | 6.88 out of 9 |

The Core Finding: Rising Tides, Not Crashing Waves

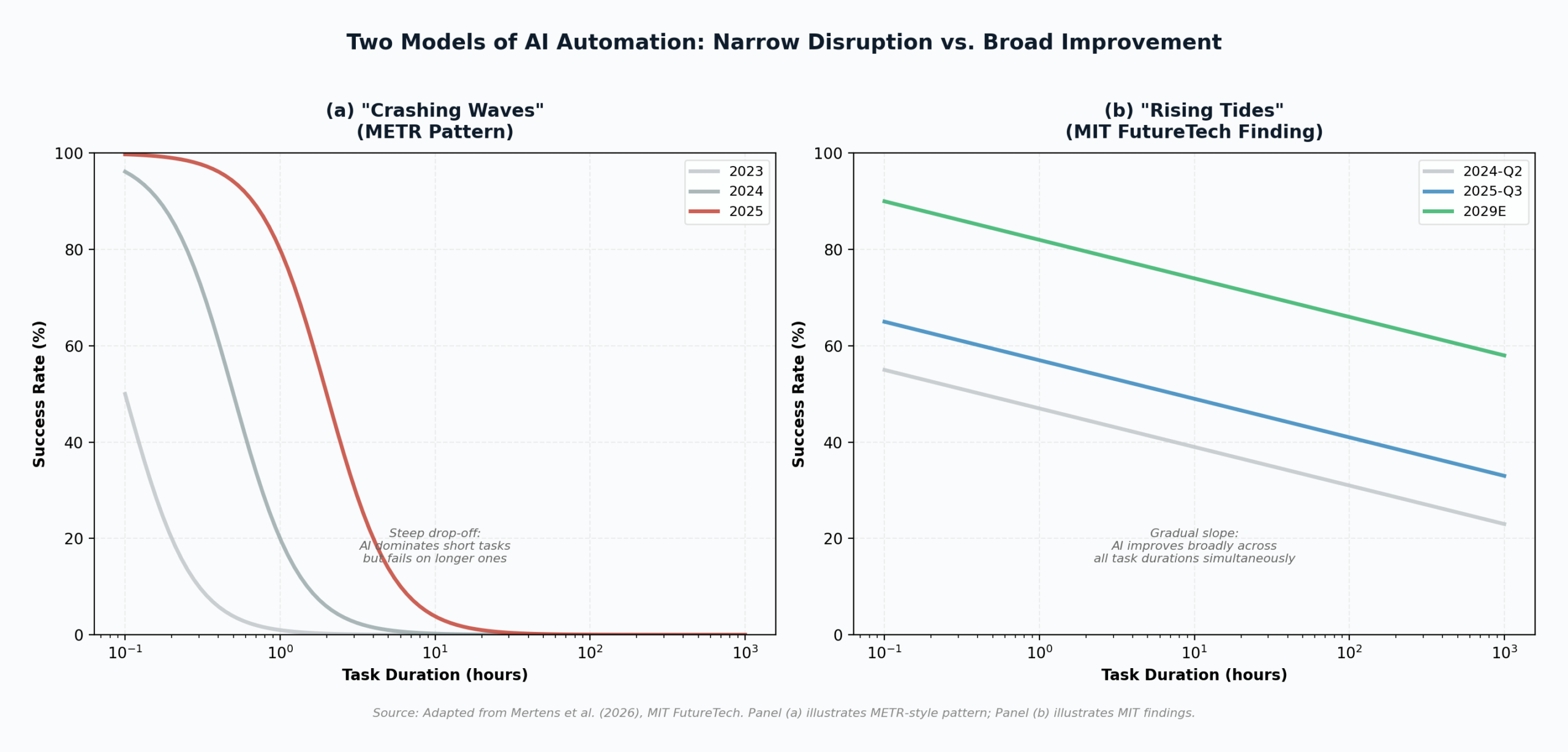

The study’s central contribution is distinguishing between two patterns of AI automation. “Crashing waves” describe a scenario where AI capabilities surge abruptly to dominate a narrow set of tasks while failing at everything else, after which the capability frontier moves rapidly on to the next set of tasks. “Rising tides” describe a scenario where AI improves more gradually and uniformly across a broad range of tasks simultaneously (Mertens et al., 2026).

The distinction matters because it has profoundly different implications for labor markets. Crashing waves suggest that specific occupations will be wiped out suddenly while others remain untouched, a pattern that creates concentrated, acute disruption. Rising tides suggest that every occupation will be affected gradually and simultaneously, creating a diffuse, chronic transformation that is harder to see in any single job category but ultimately more pervasive.

The MIT data strongly supports the rising tides model. The pooled logistic regression of AI success on task duration shows a relatively flat slope of negative 0.31 (in log-odds per ten-fold increase in duration). For every ten-fold increase in task duration (for example, moving from a 30-minute task to a 5-hour task), the success rate drops by only about 7.6 percentage points from a 60% baseline. This is strikingly different from the METR study’s findings on 170 research and software engineering tasks, which showed a much steeper decline, indicating that AI dominated short tasks but failed rapidly as task length increased (METR, 2025).
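To see how a slope of negative 0.31 turns into a drop of roughly 7.6 percentage points, the arithmetic can be sketched as below. This is an illustration, not the paper's code: it assumes the slope is expressed in log-odds per ten-fold (log10) increase in task duration, and the function names are made up for this example.

```python
import math


def logit(p: float) -> float:
    """Convert a probability to log-odds."""
    return math.log(p / (1.0 - p))


def sigmoid(x: float) -> float:
    """Convert log-odds back to a probability."""
    return 1.0 / (1.0 + math.exp(-x))


def success_after_scaling(baseline: float, slope_per_decade: float,
                          duration_ratio: float) -> float:
    """Success probability after task duration is multiplied by duration_ratio.

    Assumes (not confirmed by the source) that the slope applies to
    log10(duration) on the log-odds scale.
    """
    decades = math.log10(duration_ratio)
    return sigmoid(logit(baseline) + slope_per_decade * decades)


baseline = 0.60   # overall success rate reported in the article
slope = -0.31     # pooled logistic slope reported in the article

after_tenfold = success_after_scaling(baseline, slope, 10.0)
drop_pp = (baseline - after_tenfold) * 100

print(f"Success after a ten-fold longer task: {after_tenfold:.3f}")
print(f"Drop from baseline: {drop_pp:.1f} percentage points")
```

Under that assumption the 60% baseline falls to about 52.4%, which reproduces the 7.6-point drop quoted above. The same function can be called with other ratios, for example `success_after_scaling(0.60, -0.31, 100.0)` for a hundred-fold longer task.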

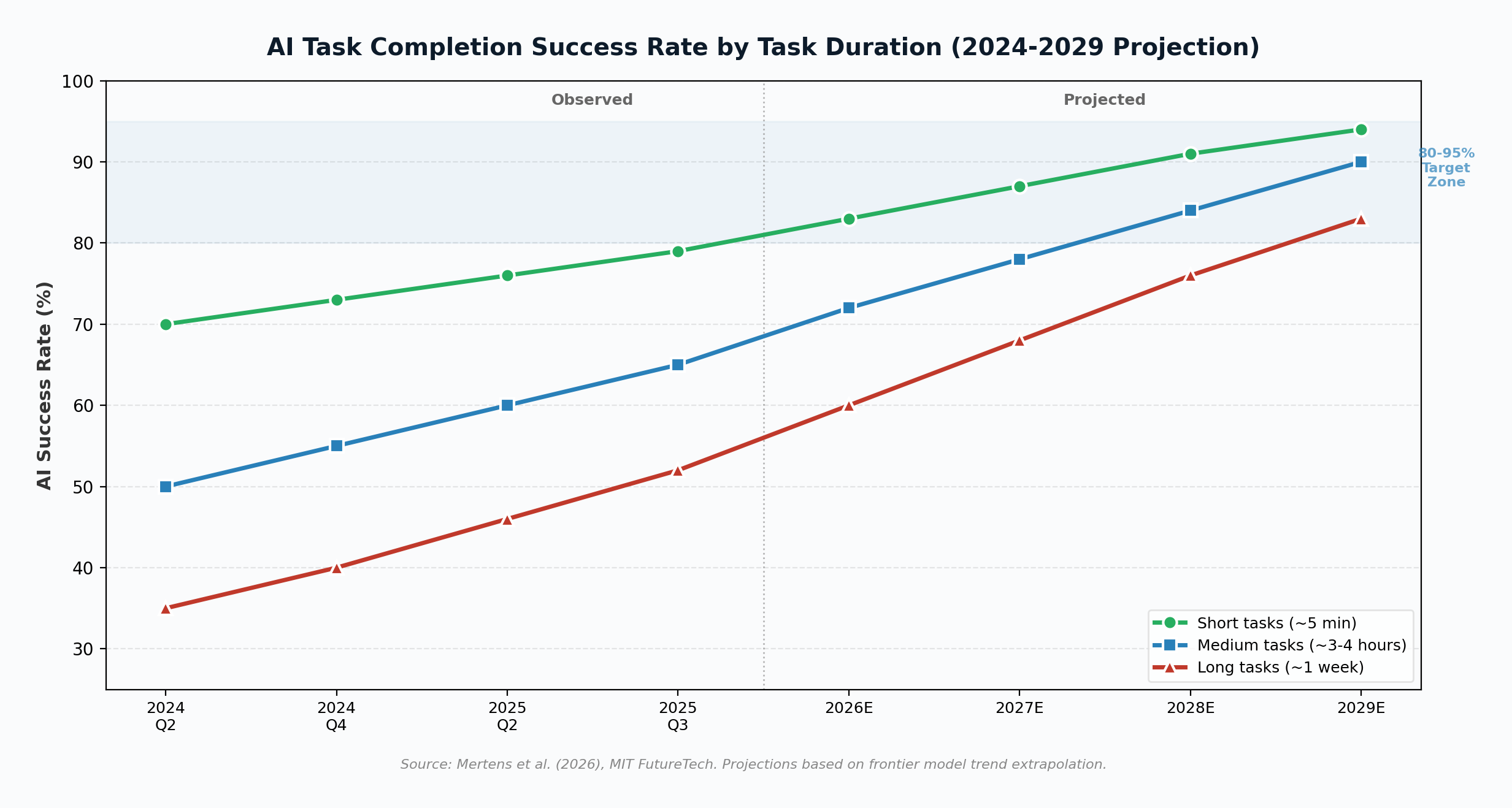

The Numbers: How Fast AI Is Improving

The time-series analysis in the paper reveals a striking pace of improvement. In the second quarter of 2024, frontier AI models could complete tasks that take a human approximately 3 to 4 hours with about a 50% success rate. By the third quarter of 2025, that same 50% success threshold had shifted to tasks taking a full work week. The overall success rate for 3-to-4-hour tasks climbed from 50% to approximately 65% in just 15 months (Mertens et al., 2026).

The researchers estimate that the doubling time for AI capability, measured as the task duration at which models achieve a fixed success rate, is approximately 3.8 months. The failure rate halving time ranges from 2.4 to 3.2 years depending on task duration. If these trends persist, the paper projects that by 2029, LLMs will complete most text-related tasks with 80% to 95% success at minimally sufficient quality. Reaching near-perfect success rates, or achieving comparable success at superior quality, would require several additional years beyond that (Mertens et al., 2026).
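The doubling and halving figures can be sanity-checked from the two data points quoted earlier (50% success on 3-to-4-hour tasks in Q2 2024, about 65% on the same tasks 15 months later, and the 50% threshold moving to a full work week). The sketch below is a back-of-envelope calculation under stated assumptions, not the paper's estimation method: it assumes simple exponential trends, takes 3.5 hours as the midpoint of "3 to 4 hours", treats a work week as 40 hours, and uses a hypothetical 3.5-year horizon from Q3 2025 to 2029.

```python
import math


def doubling_time_months(start_hours: float, end_hours: float,
                         elapsed_months: float) -> float:
    """Months for the task duration at a fixed success rate to double."""
    return elapsed_months * math.log(2) / math.log(end_hours / start_hours)


def halving_time_years(fail_start: float, fail_end: float,
                       elapsed_years: float) -> float:
    """Years for the failure rate to halve, assuming exponential decay."""
    return elapsed_years * math.log(2) / math.log(fail_start / fail_end)


def projected_success(fail_now: float, halving_years: float,
                      years_ahead: float) -> float:
    """Success rate after years_ahead if the failure rate keeps halving."""
    return 1.0 - fail_now * 0.5 ** (years_ahead / halving_years)


ELAPSED_MONTHS = 15      # Q2 2024 to Q3 2025, per the article
YEARS_TO_2029 = 3.5      # assumption: Q3 2025 to roughly early 2029

# 50% threshold: ~3.5 h (assumed midpoint) to a 40 h work week (assumed).
doubling = doubling_time_months(3.5, 40.0, ELAPSED_MONTHS)

# Failure rate on 3-to-4-hour tasks: 50% down to about 35%.
halving = halving_time_years(0.50, 0.35, ELAPSED_MONTHS / 12)

# Project with the halving-time range the article reports (2.4 to 3.2 years).
low = projected_success(0.35, 3.2, YEARS_TO_2029)
high = projected_success(0.35, 2.4, YEARS_TO_2029)

print(f"Two-point doubling time: {doubling:.1f} months")
print(f"Two-point halving time:  {halving:.1f} years")
print(f"Projected 2029 success:  {low:.0%} to {high:.0%}")
```

These crude two-point estimates come out at roughly 4.3 months and 2.4 years, close to the reported 3.8 months and 2.4-to-3.2-year range, and the projected success for 3-to-4-hour tasks lands in the mid-80s percent, inside the 80% to 95% band. The result is sensitive to the assumed horizon and to which task duration is used, so it should be read as a consistency check only.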

Key Finding

Newer AI models create a parallel upward shift in the success curve, meaning they improve performance approximately equally regardless of whether a task takes 5 minutes or 24 hours. Larger models (over 100 billion parameters) create an outward rotation, meaning they disproportionately improve on shorter tasks. This distinction is critical: the benefit of scale is concentrated, but the benefit of each new generation is broad.
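The difference between a parallel shift and a rotation can be made concrete with a toy logistic curve. The sketch below is purely illustrative: all coefficients are hypothetical, and it assumes that "shift" means a change in the intercept while "rotation" means a change in slope around a pivot at long task durations. The paper's actual specification may differ.

```python
import math


def log_odds(duration_hours: float, intercept: float, slope: float) -> float:
    """Log-odds of success as a linear function of log10(duration)."""
    return intercept + slope * math.log10(duration_hours)


def success(duration_hours: float, intercept: float, slope: float) -> float:
    """Success probability implied by the log-odds."""
    return 1.0 / (1.0 + math.exp(-log_odds(duration_hours, intercept, slope)))


# Hypothetical coefficients chosen only to illustrate the two patterns.
PIVOT = math.log10(24.0)   # rotation pivots at 24-hour tasks (assumption)
DELTA = 0.30               # extra steepness from the rotation (assumption)

base = {"intercept": 0.40, "slope": -0.31}
shifted = {"intercept": 0.90, "slope": -0.31}             # newer generation
rotated = {"intercept": 0.40 + DELTA * PIVOT,             # larger model
           "slope": -0.31 - DELTA}

SHORT, LONG = 5 / 60, 24.0  # 5 minutes and 24 hours, in hours

for label, params in (("shift", shifted), ("rotation", rotated)):
    gain_short = success(SHORT, **params) - success(SHORT, **base)
    gain_long = success(LONG, **params) - success(LONG, **base)
    print(f"{label:>8}: +{gain_short * 100:.1f} pp at 5 min, "
          f"+{gain_long * 100:.1f} pp at 24 h")
```

In this toy setup the shifted curve gains the same amount of log-odds at every duration, so the improvement is broad, while the rotated curve gains most on 5-minute tasks and nothing at the 24-hour pivot, so the improvement is concentrated on shorter tasks.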

Which Occupations Are Most Affected?

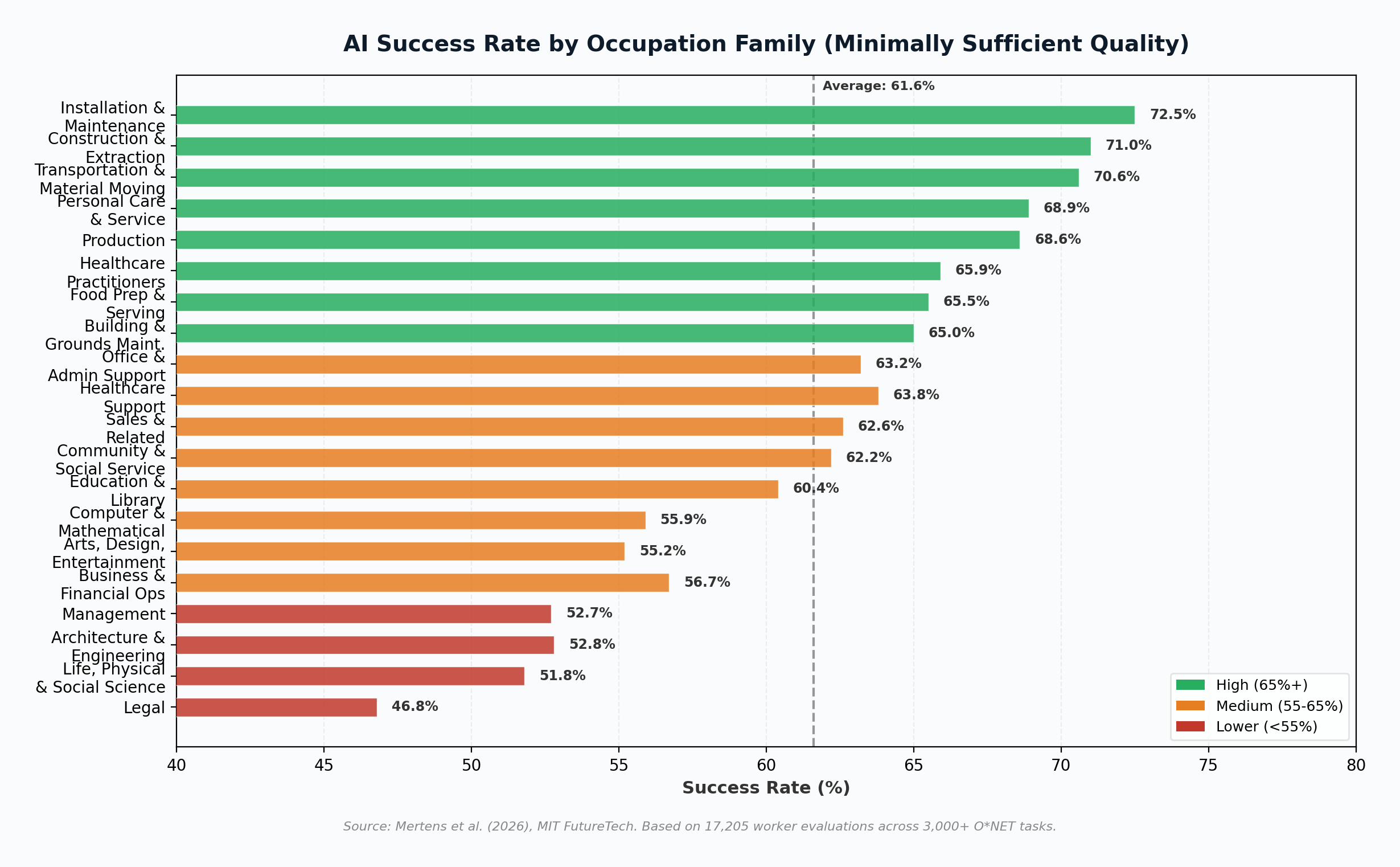

One of the study’s most counterintuitive findings is which occupation families showed the highest AI success rates. Installation and Maintenance (72.5%), Construction and Extraction (71.0%), and Transportation and Material Moving (70.6%) topped the list, not because AI can physically perform those jobs, but because the text-based tasks associated with those jobs, such as writing safety reports, preparing inspection documentation, and creating training materials, are highly amenable to LLM automation (Mertens et al., 2026).

At the other end, Legal (46.8%), Life, Physical, and Social Science (51.8%), and Management (52.7%) showed the lowest success rates. These categories involve longer, more sequentially dependent tasks that require sustained reasoning across multiple steps. Notably, Personal Care and Service showed the steepest negative slope (negative 0.93), meaning AI success drops most dramatically as task duration increases in that category, suggesting a sharper divide between simple and complex caregiving tasks.

The critical observation is the compressed range. The difference between the highest-performing and lowest-performing occupation family is only 25.7 percentage points (72.5% vs. 46.8%). No occupation family is immune, and none is fully dominated. This is the rising tides pattern: AI is not obliterating one industry while leaving others untouched. It is lifting capability across the board.

Our Analysis: What This Really Means

The MIT study provides the empirical foundation. But the implications extend well beyond what the paper itself claims. Drawing on broader labor market data and enterprise adoption evidence, several conclusions emerge.

1. The “Safe Job” Narrative Is Collapsing

For years, public discourse around AI automation has centered on identifying which jobs are “safe” and which are “at risk.” The MIT data invalidates this framing. If AI is improving across all text-based tasks simultaneously, the question is not which occupations will be disrupted but how quickly each will be transformed. The World Economic Forum’s Future of Jobs Report 2025 projects that 39% of core job skills will change by 2030 and that 59% of workers will need to upskill or reskill to remain competitive (World Economic Forum, 2025). The MIT data suggests this may be conservative. If 80% to 95% success rates on text-based tasks are achieved by 2029, the skill transformation will affect nearly every knowledge worker, not just the traditionally “exposed” categories.

2. “Minimally Sufficient” Is Not a Low Bar

A 60% success rate at “minimally sufficient quality” may sound modest. It is not. The MIT definition of minimally sufficient (score of 7 or higher) means the output requires no edits and can be used as delivered. At 60%, this means that for a majority of tasks in most occupations, a human worker can prompt an AI, receive a usable output, and move on without rework. Even at 50%, the AI is functioning as a coin flip that, when it lands correctly, saves the worker the entire task duration. For a 3-hour task, that is 1.5 hours of reclaimed productivity on average before counting the time spent prompting and checking the output, with additional time savings from using the AI output as a starting point even when it fails to meet the threshold. McKinsey estimates that 57% of current U.S. work hours involve tasks that could theoretically be automated with today’s technology (ALM Corp, 2026). The MIT data provides the empirical granularity that McKinsey’s theoretical estimate lacked.
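The coin-flip arithmetic above is an expected-value calculation, and it can be sketched as follows. The `salvage_fraction` and `overhead_hours` parameters are hypothetical knobs added for illustration (partial savings from a failed output, and time spent prompting and reviewing). Neither value comes from the MIT study.

```python
def expected_hours_saved(task_hours: float, success_rate: float,
                         salvage_fraction: float = 0.0,
                         overhead_hours: float = 0.0) -> float:
    """Expected hours saved per task when delegating a first attempt to AI.

    success_rate:     probability the output is usable as is.
    salvage_fraction: share of the task time still saved when the output
                      fails but serves as a starting point (hypothetical).
    overhead_hours:   time spent prompting and reviewing, paid every time
                      (hypothetical).
    """
    if not 0.0 <= success_rate <= 1.0:
        raise ValueError("success_rate must be between 0 and 1")
    full_saving = success_rate * task_hours
    partial_saving = (1.0 - success_rate) * salvage_fraction * task_hours
    return full_saving + partial_saving - overhead_hours


# The article's example: a 3-hour task at a 50% success rate.
coin_flip = expected_hours_saved(3.0, 0.50)

# Same task at 60% success with illustrative salvage and overhead values.
with_frictions = expected_hours_saved(3.0, 0.60,
                                      salvage_fraction=0.25,
                                      overhead_hours=0.25)

print(f"Coin flip, no frictions: {coin_flip:.2f} hours saved")
print(f"60% with frictions:      {with_frictions:.2f} hours saved")
```

The first call reproduces the 1.5-hour figure. The second shows how the estimate moves once review overhead and partial reuse are included, which is why the headline success rate alone does not determine the productivity gain.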

3. The Adoption Gap Is the Real Variable

The paper’s most important caveat may be its most understated: “These AI capability improvements would impact the economy and labor market as organizations adopt AI, which could have a substantially longer timeline.” This is the critical variable. Capability and adoption are not the same thing. As of Q1 2026, 72% of enterprises have at least one AI workload in production, up from 55% in 2024, but only 28% describe their adoption as “mature” (McKinsey, 2026). The average enterprise runs 4.2 AI models in production, up from 1.9 in 2023 (Gartner via Medha Cloud, 2026). Enterprise AI budgets grew 22% year over year, and organizations report an average 37% productivity improvement in AI-augmented roles (Medha Cloud, 2026).

These numbers are accelerating, but they still describe an early-adoption curve. The historical pattern from prior technology waves suggests that enterprise adoption typically lags capability by 5 to 10 years. If the MIT projections on AI capability are correct, the 2029 capability frontier will not translate into 2029 labor market transformation. At a lag of 5 to 10 years, it would translate into labor market transformation around 2034 to 2039, as organizations build the systems engineering, workflow integration, and management practices needed to deploy AI at scale.

4. Young Workers Face Asymmetric Risk

The rising tides model has a particularly troubling implication for early-career workers. If AI can already perform 60% of text-based tasks at acceptable quality, the tasks most likely to be delegated to AI are precisely the entry-level, routine, text-heavy tasks that traditionally served as the training ground for young professionals. Goldman Sachs data shows that among 22-to-25-year-olds in AI-exposed roles, employment fell 16% from late 2022 to mid-2025, while experienced workers in the same fields remained largely stable. Among young software developers specifically, the decline was nearly 20% (ALM Corp, 2026). CNBC reported that postings for entry-level jobs declined approximately 35% since January 2023 (ALM Corp, 2026). The rising tides model explains this pattern: AI is not replacing senior professionals whose judgment integrates years of contextual knowledge. It is replacing the structured, teachable tasks that organizations used to assign to junior staff.

Critical Question

If AI replaces the tasks that historically trained junior workers, how will organizations develop the experienced professionals they still need? The rising tides model does not merely threaten current employment. It threatens the pipeline through which future expertise is built. No major institution, whether corporate, academic, or governmental, has published a credible plan for addressing this structural apprenticeship gap.

5. The Net Job Math Is Misleading

The World Economic Forum projects 92 million jobs displaced by 2030 but 170 million new roles created, for a net gain of 78 million jobs globally (World Economic Forum, 2025). Goldman Sachs models suggest 300 million full-time jobs will be “affected” but that the net displacement rate in the U.S. will be 6% to 7% (ALM Corp, 2026). These aggregated figures obscure the asymmetry of impact. The MIT data shows that AI capability is broadly distributed across occupations. But the workers who lose roles and the workers who gain new ones are not the same people, in the same industries, or in the same countries. A data entry clerk displaced in Ohio is not the same worker who fills a new AI ethics officer role in San Francisco. The net-positive framing, while technically accurate at the macro level, can mask severe disruption at the individual, community, and regional level.

The confirmed, attributable AI job displacement data tells a more grounded story. Challenger, Gray & Christmas tracked approximately 55,000 job cuts directly attributed to AI in 2025, out of 1.17 million total layoffs (about 4.7% of the total); that overall layoff count was the highest since the 2020 pandemic (AIMultiple, 2026). Independent analysis estimates total U.S. AI-attributable job displacement in 2025 at 200,000 to 300,000 positions, representing 0.13% to 0.20% of total nonfarm employment (ALM Corp, 2026). These numbers are small relative to the projected totals, but they are growing, and the MIT data suggests the underlying capability driving displacement is improving at a pace that will compound these effects significantly by 2029.

Conclusion: The Quiet Transformation

The MIT FutureTech study’s most important contribution is not any single data point. It is the reframing. By demonstrating empirically that AI automation follows a rising tides pattern rather than a crashing waves pattern, the researchers have changed what we should be watching for. The danger is not a sudden, visible wave that destroys one industry while leaving others standing. The danger, and the opportunity, is a gradual, invisible tide that lifts AI capability across every occupation simultaneously, transforming jobs not through dramatic displacement events but through the slow accumulation of tasks that no longer require a human.

This makes the transformation harder to detect in real time, harder to mobilize political responses to, and harder for individual workers to prepare for. A crashing wave is dramatic and generates headlines. A rising tide is quiet until it reaches the point where the ground beneath your feet is underwater. The MIT data suggests we are currently in the period where the water is ankle-deep and rising at approximately 8 to 11 percentage points per year. By 2029, for most text-based tasks, it will be chest-high. The organizations, workers, and policymakers who treat this as a gradual transformation requiring sustained, structural adaptation will be better positioned than those waiting for a dramatic disruption that may never arrive in the form they expect.

References

AIMultiple. (2026, April 6). Top 20 predictions from experts on AI job loss. https://aimultiple.com/ai-job-loss

ALM Corp. (2026, March 13). AI job displacement statistics 2026-2030: 60+ data points. https://almcorp.com/blog/ai-job-displacement-statistics/

McKinsey & Company. (2026). The state of AI in early 2026: Gen AI adoption spikes and starts to deliver value. https://www.mckinsey.com/capabilities/quantumblack/our-insights/the-state-of-ai

Medha Cloud. (2026, March 14). 67 AI adoption statistics for 2026: Enterprise & SMB data. https://medhacloud.com/blog/ai-adoption-statistics-2026

Mertens, M., Kuzee, A., Harris, B. S., Lyu, H., Li, W., Rosenfeld, J., Anto, M., Fleming, M., & Thompson, N. (2026). Crashing waves vs. rising tides: Preliminary findings on AI automation from thousands of worker evaluations of labor market tasks. arXiv preprint arXiv:2604.01363. https://arxiv.org/abs/2604.01363

METR. (2025). Measuring AI ability to complete long-horizon tasks. https://metr.org/blog/2025-03-19-measuring-ai-ability-to-complete-long-horizon-tasks/

NVIDIA Blog. (2026, March 9). State of AI report 2026: How AI is driving revenue, cutting costs and boosting productivity. https://blogs.nvidia.com/blog/state-of-ai-report-2026/

World Economic Forum. (2025). Future of Jobs Report 2025. https://www.weforum.org/publications/the-future-of-jobs-report-2025/