With Anthropic now exploring custom silicon, every major AI company is building or planning its own chips. The question is no longer whether Nvidia’s near-monopoly will erode, but how fast, and what that means for the $200 billion AI chip market.

On April 9, 2026, Reuters reported that Anthropic, the creator of Claude and one of the world’s most valuable AI startups, is considering designing its own artificial intelligence chips to reduce its dependence on external suppliers, including Nvidia, Google, and Amazon (Investing.com, 2026). The plans are still in early stages, with no dedicated team or final chip design in place. But the signal is unmistakable: the last major AI company without a custom silicon strategy is now exploring one. Anthropic joins Google, Amazon, Meta, Microsoft, xAI, and OpenAI in what has become an industry-wide movement to break free from Nvidia’s near-monopoly on AI compute hardware. This article examines what Anthropic’s announcement means for artificial intelligence, the broader economy, and Nvidia’s future.

Anthropic’s Strategic Calculus

Anthropic’s interest in custom chips comes at a moment of extraordinary growth. The company’s revenue run rate has surged from $9 billion at the end of 2025 to over $30 billion in early April 2026, with more than 1,000 enterprise customers now spending over $1 million annually (TechCrunch, 2026). Anthropic is capturing more than 73% of all spending among first-time enterprise AI buyers, doubling its large customer base in under two months (Silicon Republic, 2026).

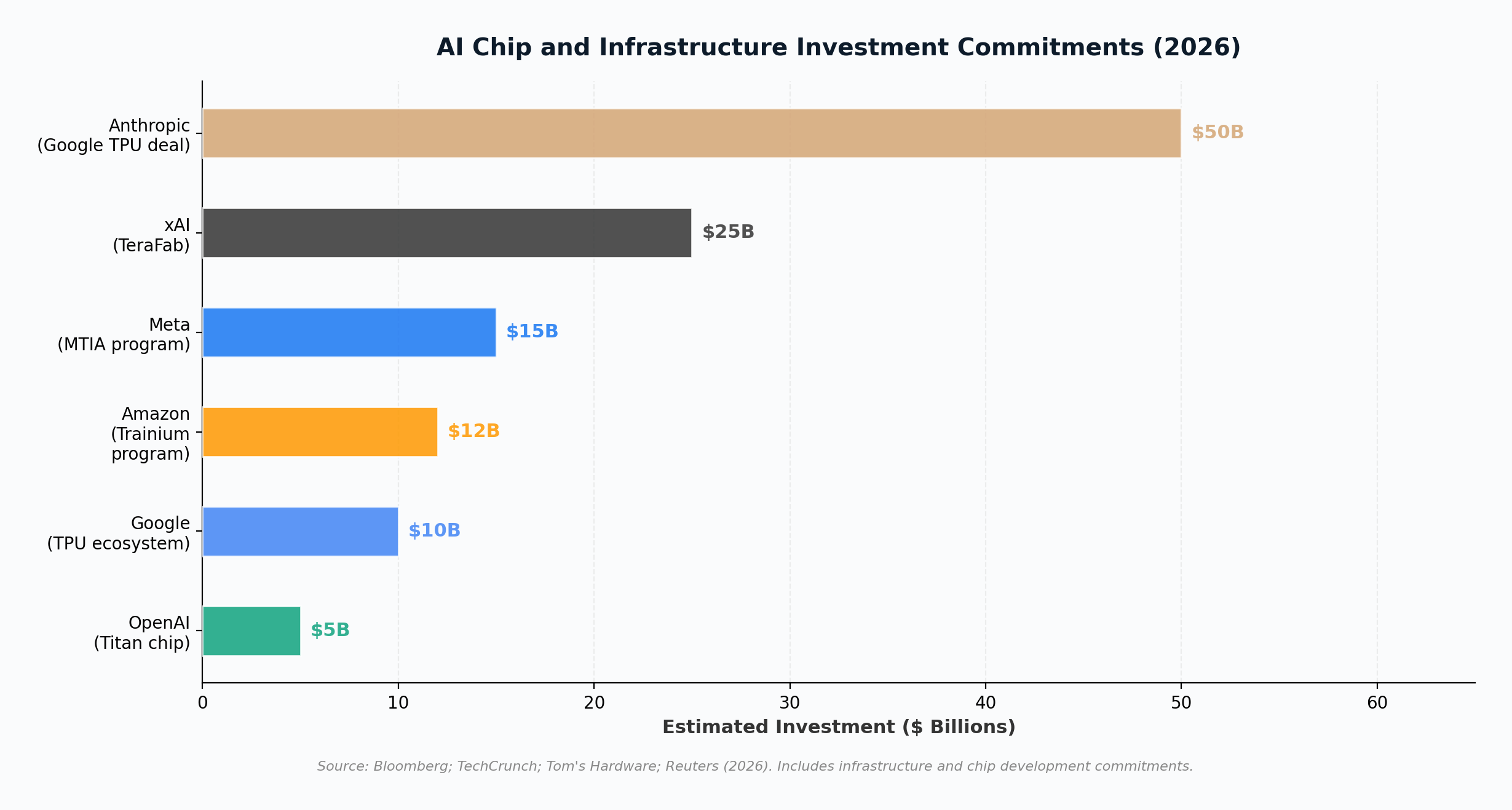

This growth creates an insatiable appetite for compute. Anthropic currently relies on a multi-vendor hardware strategy: Amazon Web Services’ Trainium and Inferentia chips as its primary cloud and training infrastructure, Google’s TPUs through a recently expanded deal worth tens of billions of dollars, and Nvidia GPUs for additional workloads (India Today, 2026). Just days before the custom chip report surfaced, Anthropic announced an expanded agreement with Google and Broadcom for 3.5 gigawatts of TPU capacity through 2031, part of a broader $50 billion commitment to U.S. computing infrastructure (TechCrunch, 2026).

The paradox is clear: Anthropic is deepening its relationships with chip suppliers while exploring ways to become less dependent on all of them. The logic is straightforward. At a revenue run rate above $30 billion and growing, with compute spending scaling alongside it, even a modest percentage reduction in compute costs through custom silicon would translate into billions in savings. More critically, chip supply has become the binding constraint on Anthropic’s growth trajectory. Designing its own chips, even partially, would give the company more control over its infrastructure roadmap at a time when every major competitor is doing the same (IO+, 2026).

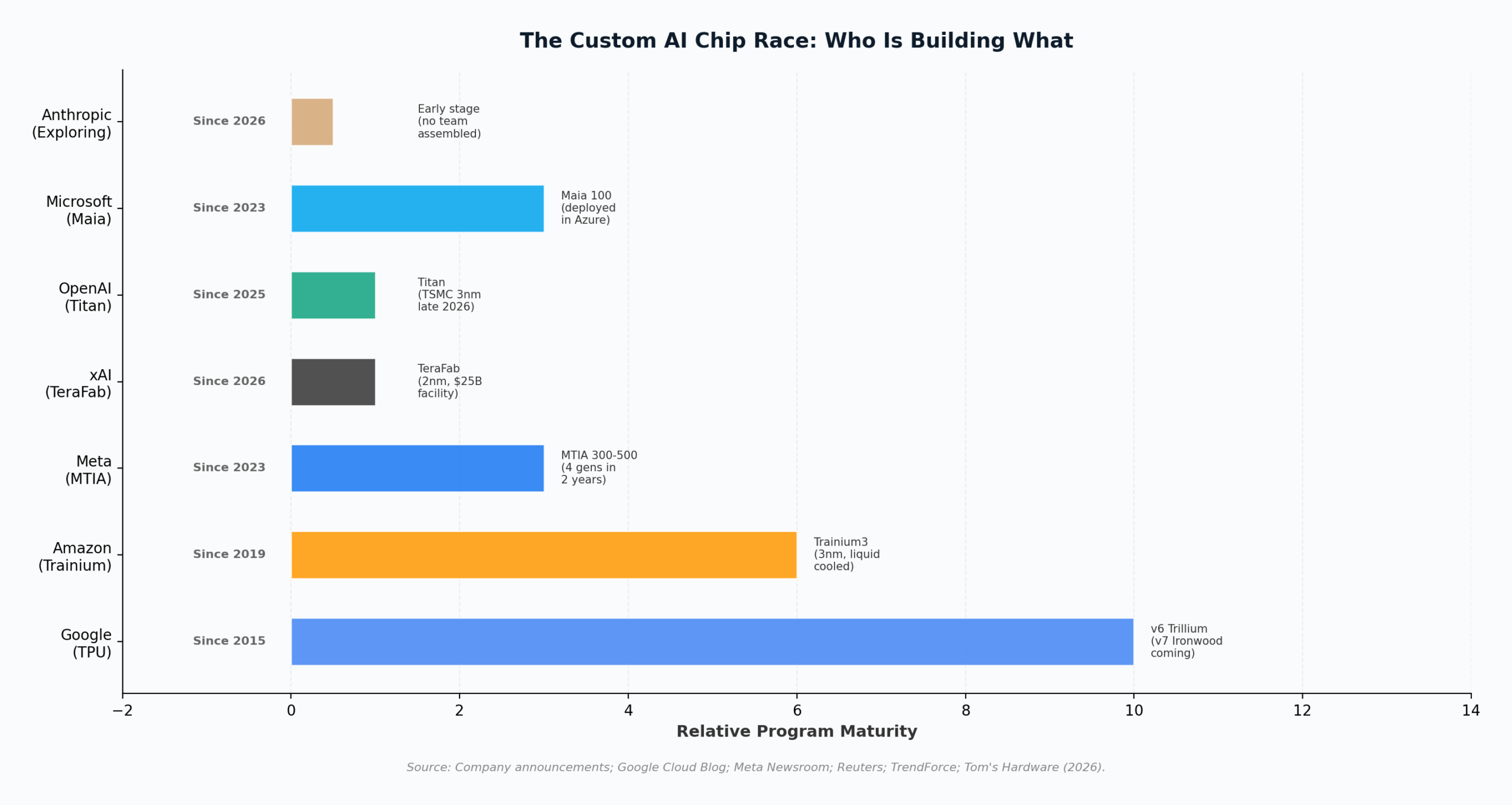

The Field: Who Is Already Building Custom Chips

Anthropic is joining a crowded field. Understanding the competitive landscape requires examining what each major player has already built or committed to building.

Google: The Pioneer

Google has the most mature custom chip program in the industry. Its Tensor Processing Units (TPUs) date back to 2015. The current sixth-generation Trillium (TPU v6) delivers a 4.7x increase in peak compute per chip over its predecessor, with doubled HBM capacity and bandwidth, and can scale to tens of thousands of chips in building-scale supercomputers (Google Cloud, 2024). The seventh-generation Ironwood TPU, built with Broadcom on a 2nm process, is expected in 2027. Google now supplies TPUs to external customers through Broadcom, as demonstrated by the Anthropic deal. Anthropic negotiated first claim on 60% of all new TPUs produced over the next five years, along with the ability to request modifications to the chip’s mathematical processing units (IO+, 2026).

Amazon: The Vertical Integrator

Amazon Web Services debuted its custom AI silicon in 2019 with the first Inferentia inference chip. The program has accelerated dramatically: Trainium3, launched in late 2025, is a 3nm liquid-cooled chip manufactured by TSMC that delivers 2.52 petaflops of FP8 compute per chip, with 144 GB of HBM3e memory and 4.9 TB/s of memory bandwidth (AWS, 2026). Amazon claims Trainium-based instances cut AI training and inference costs by up to 50% compared to equivalent GPU setups. The Trainium3 UltraServer configuration packs up to 144 chips into a single system, delivering 362 petaflops. Anthropic, as Amazon’s largest AI customer, is already a major Trainium deployer (Neuro Technus, 2026).
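The UltraServer figure follows directly from the per-chip numbers. The short Python sketch below reproduces that arithmetic using only the values quoted in the paragraph above. The function name and the aggregate memory and bandwidth totals are illustrative additions derived here, not figures published by AWS, and the sums are theoretical peaks that ignore interconnect and utilization losses.

```python
# Per-chip figures quoted in the paragraph above (AWS, 2026).
PFLOPS_PER_CHIP_FP8 = 2.52   # Trainium3 peak FP8 compute per chip, petaflops
HBM_PER_CHIP_GB = 144        # HBM3e capacity per chip, gigabytes
BW_PER_CHIP_TBPS = 4.9       # memory bandwidth per chip, terabytes per second
CHIPS_PER_ULTRASERVER = 144  # maximum chips in one UltraServer


def ultraserver_totals(chips: int = CHIPS_PER_ULTRASERVER) -> dict:
    """Scale per-chip peaks by chip count (hypothetical helper, peak values only)."""
    return {
        "fp8_petaflops": chips * PFLOPS_PER_CHIP_FP8,
        "hbm_terabytes": chips * HBM_PER_CHIP_GB / 1000,
        "memory_bandwidth_tbps": chips * BW_PER_CHIP_TBPS,
    }


totals = ultraserver_totals()
for name, value in totals.items():
    print(f"{name}: {value:.2f}")
```

The compute total comes to about 362.9 petaflops, which matches the 362 petaflops cited for the full 144-chip configuration.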

Meta: The Fastest Mover

Meta announced in March 2026 that it is developing four new generations of its Meta Training and Inference Accelerator (MTIA) chips within two years, a pace that shatters industry norms of one new generation every 12 to 24 months (Meta Newsroom, 2026). The MTIA 300 is already in production for ranking and recommendation workloads. The MTIA 400, 450, and 500 will progressively target GenAI inference, with the MTIA 500 scheduled for 2027 offering 80% more memory capacity than its predecessor. Meta’s strategy explicitly prioritizes inference optimization over training, reflecting the anticipated explosion in inference demand as AI agents become mainstream.

xAI: The Most Radical Approach

Elon Musk’s xAI launched TeraFab in March 2026, a $20 billion chip fabrication facility at Giga Texas that aims to produce 2nm chips in-house (Tom’s Hardware, 2026a). The facility will consolidate chip design, lithography, fabrication, memory production, advanced packaging, and testing under one roof. TeraFab will produce inference chips for Tesla vehicles and Optimus robots, plus space-hardened processors for orbital AI satellites. Musk estimated that 80% of TeraFab’s output would be directed toward space-based compute. Meanwhile, xAI’s Colossus data center in Memphis already operates 555,000 Nvidia GPUs across 2 gigawatts of capacity, making it the world’s largest single-site AI training facility (Introl, 2026).

OpenAI: The Late but Determined Entrant

OpenAI is developing its first custom AI chip, codenamed “Titan,” using TSMC’s 3nm process with design assistance from Broadcom. Mass production is targeted for the second half of 2026, with a second-generation version (Titan 2) planned on TSMC’s more advanced A16 process (TrendForce, 2026). The chip design is led by Richard Ho, a former Google engineer who helped lead Alphabet’s TPU program. OpenAI has indicated that custom ASICs and general-purpose GPUs will coexist in its infrastructure, with the initial chip focused on inference workloads.

| Company | Chip Program | Started | Current Generation | Primary Focus |
|---|---|---|---|---|
| Google | TPU | 2015 | v6 Trillium (v7 Ironwood coming) | Training + Inference |
| Amazon | Trainium / Inferentia | 2019 | Trainium3 (3nm, liquid cooled) | Training + Inference |
| Meta | MTIA | 2023 | MTIA 300 (4 gens in 2 years) | Inference first |
| xAI | TeraFab | 2026 | 2nm fab (inference + space) | Edge inference + orbital AI |
| OpenAI | Titan | 2025 | Titan (TSMC 3nm, late 2026) | Inference |
| Microsoft | Maia | 2023 | Maia 100 (deployed in Azure) | Inference |
| Anthropic | Exploring | 2026 | No design or team yet | TBD |

What This Means for AI

The proliferation of custom AI silicon across every major AI company reshapes the technology landscape in several fundamental ways.

AI development becomes less supply-constrained. The single biggest bottleneck in AI advancement over the past three years has been access to high-performance chips. When every frontier AI company depends on one supplier operating at maximum capacity, chip allocation becomes a geopolitical and corporate power dynamic rather than a market transaction. Custom chips diversify the supply chain, reducing the risk that any single company’s production delays can stall industry-wide progress.

Workload-specific optimization accelerates. General-purpose GPUs must serve a broad range of workloads, so no single workload gets a fully tailored design. Custom chips can be optimized for the specific mathematical operations, memory access patterns, and data flows that each company’s models require. Meta’s MTIA chips are explicitly designed for inference first, the opposite of the GPU approach, which optimizes for training and then applies the same hardware to inference at lower efficiency (Meta Newsroom, 2026). This specialization means that the same dollar of silicon investment can deliver more useful computation when directed at a specific workload.

The software ecosystem fragments. Nvidia’s most durable competitive advantage has never been raw hardware performance but CUDA, its software platform that has become the default development environment for AI research. As custom chips proliferate, the AI software stack fragments: Google uses JAX and XLA for TPUs, Amazon has its Neuron SDK for Trainium, and Meta uses PyTorch with custom MTIA kernels. This fragmentation raises switching costs and creates ecosystem lock-in effects that benefit the largest companies at the expense of smaller developers and researchers (Introl Blog, 2026).

Key Insight

The custom chip movement is fundamentally an inference story. Frontier model training still leans heavily on Nvidia’s GPUs at scale because the CUDA ecosystem and NVLink interconnect fabric remain unmatched for distributed training. But inference, the process of running trained models to serve users, is where cost reduction matters most and where custom silicon excels. As AI shifts from a training-dominated to an inference-dominated workload, the strategic value of custom chips increases dramatically.

What This Means for the Economy

The collective investment in custom AI silicon now exceeds $100 billion across the companies profiled above. These commitments are reshaping the economic structure of the technology industry and creating ripple effects across manufacturing, energy, and labor markets.

AI infrastructure becomes a domestic industrial policy priority. Anthropic’s $50 billion commitment is explicitly directed at U.S. computing infrastructure, with data centers planned across Ohio, Iowa, Texas, and Georgia. xAI’s TeraFab is in Austin, Texas. These investments create thousands of construction, engineering, and operations jobs in regions that have not traditionally been technology hubs. The fact that these facilities require gigawatt-scale power is driving parallel investments in energy infrastructure, including renewable generation and grid upgrades (IO+, 2026).

AI costs are coming down, expanding the addressable market. Custom chips are fundamentally a cost reduction strategy. When Amazon says Trainium-based instances cut costs by up to 50% compared with equivalent GPU setups, that reduction can flow through to AWS customers building AI applications (Neuro Technus, 2026). Lower inference costs mean more companies can afford to deploy AI at scale. This is the same dynamic that made cloud computing mainstream: as unit economics improved, the customer base expanded far beyond the original early adopters.

Broadcom and TSMC emerge as kingmakers. While the narrative focuses on AI companies versus Nvidia, the less visible but equally important story is the rise of Broadcom as the go-to ASIC design partner and TSMC as the indispensable manufacturer. Broadcom confirmed it is in long-term agreements to develop and supply custom TPUs for Google and Anthropic, with AI chip revenue projections potentially exceeding $100 billion by 2027 (IO+, 2026). TSMC manufactures chips for Amazon (Trainium3), OpenAI (Titan), and most other custom silicon programs. These two companies sit at the nexus of the custom chip revolution, profiting regardless of which AI company wins.

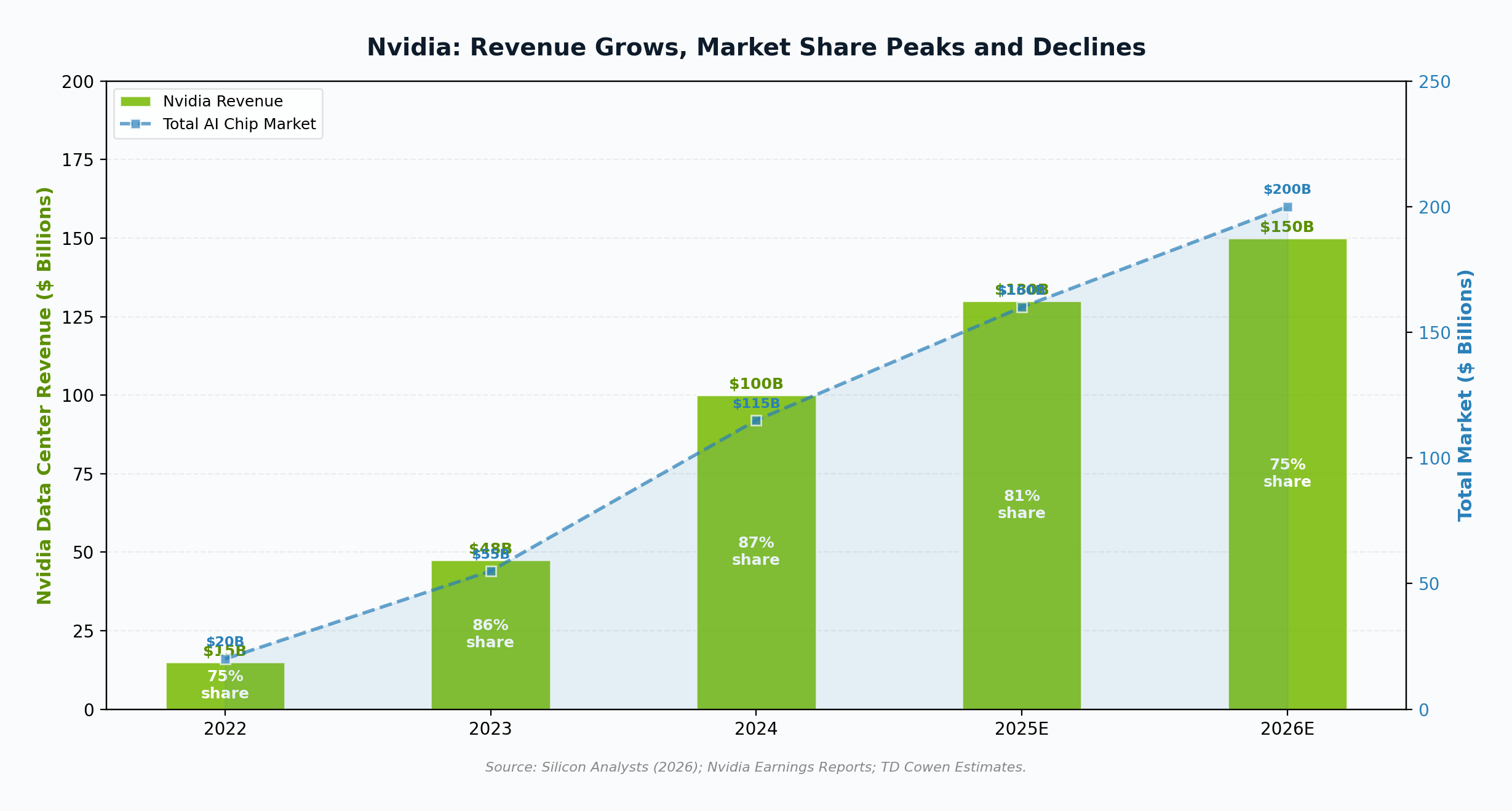

What This Means for Nvidia

The most important nuance in the Nvidia story is that the company’s revenue continues to grow even as its market share declines. Nvidia’s data center revenue expanded from $15 billion in 2022 to over $100 billion in 2024, and is projected to reach $150 billion or more in 2026 (Silicon Analysts, 2026). The AI accelerator market is growing so fast that, even after losing 12 percentage points of share since 2024, Nvidia stands to add roughly $50 billion in annual revenue over that period.
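A rough Python sketch shows how revenue can rise while share falls. It rests on two loudly stated assumptions: the 87% starting share is taken from the title of the cited Silicon Analysts piece rather than from the text above, and data center revenue is treated as a stand-in for accelerator revenue. The helper name is hypothetical.

```python
def implied_market_size(revenue_bn: float, share: float) -> float:
    """Total market implied by one vendor's revenue and its market share."""
    return revenue_bn / share


# Revenue figures from the paragraph above, in billions of dollars.
REVENUE_2024_BN = 100.0
REVENUE_2026_BN = 150.0

# ASSUMPTION: 87% is the 2024 peak share named in the title of the cited
# Silicon Analysts piece; 12 points lower gives 75% for 2026.
SHARE_2024 = 0.87
SHARE_2026 = SHARE_2024 - 0.12

market_2024 = implied_market_size(REVENUE_2024_BN, SHARE_2024)
market_2026 = implied_market_size(REVENUE_2026_BN, SHARE_2026)

print(f"Implied 2024 market: ${market_2024:.0f}B")
print(f"Implied 2026 market: ${market_2026:.0f}B")
print(f"Incremental Nvidia revenue: ${REVENUE_2026_BN - REVENUE_2024_BN:.0f}B")
print(f"Market growth factor: {market_2026 / market_2024:.2f}x")
```

Under these assumptions the implied market grows from roughly $115 billion to about $200 billion, in line with the $200 billion figure in the article’s opening, while Nvidia’s own revenue rises by $50 billion.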

Training remains Nvidia’s stronghold. Custom chips have made the most progress in inference, where workloads are more predictable and easier to optimize. For frontier model training, which requires massive distributed computing with thousands of GPUs communicating simultaneously, Nvidia’s NVLink interconnect and CUDA software ecosystem remain effectively unrivaled. Even xAI, which is building its own chip fab, purchased 555,000 Nvidia GPUs at approximately $18 billion for Colossus (Introl, 2026). The custom chip movement is, for now, a complement to Nvidia’s training business rather than a replacement.
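To put the Colossus figures in per-unit terms, the sketch below divides the cited totals by the GPU count. The inputs are the 555,000 GPUs and roughly $18 billion cited above, plus the 2 gigawatts mentioned in the xAI section. The results are back-of-the-envelope averages, and the power figure is site-level, so it presumably covers cooling, networking, and other overhead beyond the GPUs themselves.

```python
GPU_COUNT = 555_000
PURCHASE_COST_USD = 18e9   # approximate purchase cost cited above
SITE_POWER_WATTS = 2e9     # 2 gigawatts of site capacity, from the xAI section

# Simple averages; real pricing and power draw vary by GPU model and system.
cost_per_gpu = PURCHASE_COST_USD / GPU_COUNT
watts_per_gpu = SITE_POWER_WATTS / GPU_COUNT

print(f"Implied cost per GPU: ${cost_per_gpu:,.0f}")
print(f"Implied site power per GPU: {watts_per_gpu / 1000:.1f} kW")
```

That works out to roughly $32,000 and 3.6 kW of site capacity per GPU, which gives a sense of how much capital and power a custom inference chip would need to undercut.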

Nvidia is responding aggressively. The Vera Rubin platform, announced at CES 2026, combines a custom Vera CPU with next-generation Rubin GPUs and a specialized Rubin CPX context processing accelerator. The system delivers 7.5x the AI performance of current Blackwell systems, with 600 kW rack-scale deployments planned for 2027 (NVIDIA Newsroom, 2026). The CPX co-processor is aimed at niche workloads such as long-context attention, directly targeting the use cases that custom ASIC designers have focused on. TD Cowen projects that custom chips’ share of the AI market will only rise from 10% to 15% by 2030, and that Nvidia will retain roughly 90% of the GPU market even as the total market more than triples (TD Cowen via Reddit, 2025).

The real risk is long-term margin compression. Nvidia’s extraordinary profitability (over 70% gross margins on data center products) depends on being the only viable option for most AI workloads. As custom chips mature and cloud providers offer increasingly competitive alternatives, Nvidia may face pressure to reduce pricing to defend volume. This would still leave the company profitable, but the era of near-monopoly pricing power may be approaching its end.

The Bottom Line

Anthropic’s exploration of custom chips is not a single company making a hardware decision. It is the final confirmation of an industry-wide structural shift. When every major AI company, from the most established (Google, Amazon) to the newest (Anthropic, xAI), is independently concluding that building custom silicon is necessary for competitive survival, the message is unambiguous: the era of total dependence on one chip supplier is ending. What replaces it will not be the elimination of Nvidia but the emergence of a multi-vendor, multi-architecture AI compute ecosystem where software optimization, vertical integration, and workload-specific design matter as much as raw hardware performance. The AI chip race is not a zero-sum game. The market is growing fast enough to reward multiple winners. But the balance of power is shifting, and the companies that control their own silicon will increasingly control their own destiny.

References

Amazon Web Services. (2026). AI accelerator: AWS Trainium. https://aws.amazon.com/ai/machine-learning/trainium/

Google Cloud. (2024, October 30). Trillium sixth-generation TPU is in preview. https://cloud.google.com/blog/products/compute/trillium-sixth-generation-tpu-is-in-preview

India Today. (2026, April 10). Anthropic may build its own chips to power Claude AI. https://www.indiatoday.in/technology/news/story/anthropic-may-build-its-own-chips-2894152-2026-04-10

Introl. (2026, January 3). xAI Colossus hits 2 GW: 555,000 GPUs, $18B, largest AI site. https://introl.com/blog/xai-colossus-2-gigawatt-expansion-555k-gpus-january-2026

Introl. (2026, January 31). Google TPU vs Nvidia GPU: Infrastructure decision framework 2025. https://introl.com/blog/google-tpu-vs-nvidia-gpu-infrastructure-decision-framework-2025

Investing.com. (2026, April 9). Anthropic weighs building its own AI chips. https://www.investing.com/news/stock-market-news/anthropic-weighs-building-its-own-ai-chips-reuters-4606984

IO+. (2026, April 9). How Anthropic and Google plan to challenge Nvidia’s AI dominance. https://ioplus.nl/en/posts/how-anthropic-and-google-plan-to-challenge-nvidias-ai-dominance

Meta Newsroom. (2026, March 11). Expanding Meta’s custom silicon to power our AI workloads. https://about.fb.com/news/2026/03/expanding-metas-custom-silicon-to-power-our-ai-workloads/

Neuro Technus. (2026, March 23). AWS Trainium vs Nvidia: Inside Amazon’s custom silicon lab. https://neurotechnus.com/2026/03/23/aws-trainium-nvidia-challenge/

NVIDIA Newsroom. (2026, January 5). NVIDIA kicks off the next generation of AI with Rubin. https://nvidianews.nvidia.com/news/rubin-platform-ai-supercomputer

Silicon Analysts. (2026, February 21). Nvidia GPU market share 2024-2026: 87% peak, what comes next. https://siliconanalysts.com/analysis/nvidia-ai-accelerator-market-share-2024-2026

Silicon Republic. (2026, April 10). Anthropic reportedly mulls designing own chips amid shortage. https://www.siliconrepublic.com/machines/anthropic-reportedly-mulls-designing-own-chips-amid-shortage

TechCrunch. (2026, April 7). Anthropic ups compute deal with Google and Broadcom amid surging demand. https://techcrunch.com/2026/04/07/anthropic-compute-deal-google-broadcom-tpus/

Tom’s Hardware. (2026a, March 22). Elon Musk formally launches $20 billion TeraFab chip project. https://www.tomshardware.com/tech-industry/elon-musk-formally-launches-20-billion-terafab-chip-project

TrendForce. (2026, January 15). OpenAI reportedly to deploy custom AI chip on TSMC N3 by end-2026. https://www.trendforce.com/news/2026/01/15/news-openai-reportedly-to-deploy-custom-ai-chip-on-tsmc-n3-by-end-2026